Walk into a data center and it feels like everything runs on air and lights. But the real work happens in the cables. Think of data center cables like city roads during rush hour. They quietly move traffic (data) where it needs to go, even when demand spikes fast.

In 2026, that demand keeps rising. AI training, cloud growth, and bigger networks mean you need more bandwidth, tighter timing, and more connections per rack. So network cabling becomes a design priority, not an afterthought.

This guide explains how cables get chosen and where they go. You’ll see the main cable types, how they’re laid out in common data center setups, and how similar ideas show up across enterprise networks. Then you’ll get practical tips for avoiding cable messes as speeds move toward 800G and beyond.

Ready to see how these wires make the digital world tick?

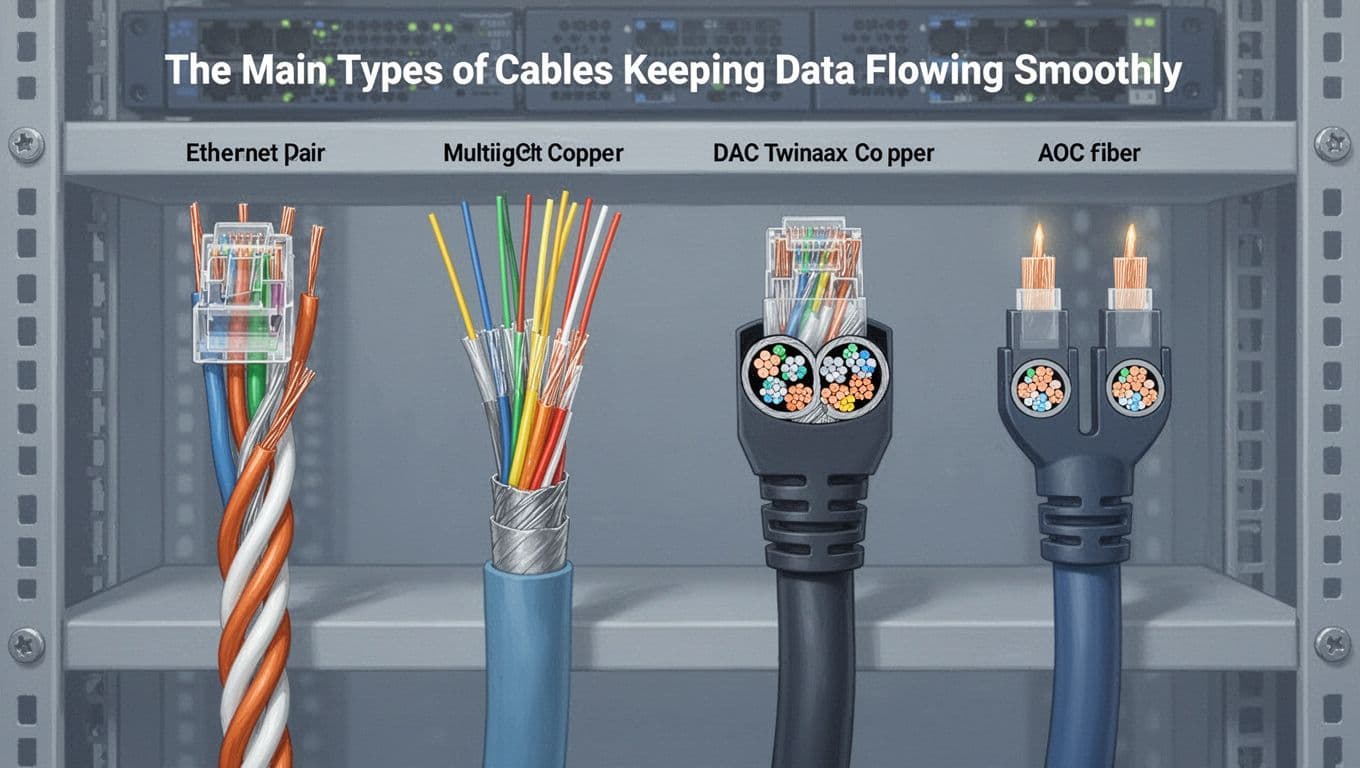

The Main Types of Cables Keeping Data Flowing Smoothly

Most data centers use a mix of copper and fiber. They pick each type based on distance, speed, and cost. Then they build an organized path so upgrades do not turn into chaos.

To choose the right one, teams usually ask three questions: How far is the run, how much speed is needed, and how dense are the racks?

Here’s a fast comparison of four cable types you’ll see a lot:

| Cable type | Common use | Main limitation | 2026 reality |
|---|---|---|---|
| Copper Ethernet (CAT6/CAT6a) | LAN links, short server-to-switch links | 100 m channel limit; crosstalk and interference | Still common for office and shorter links |
| Fiber optic (MMF/SMF) | Switch-to-server, backbone, inter-building | Needs more install care (cleaning, bend radius) | Fiber stays dominant for long and dense paths |
| DAC (direct-attach copper) | High-speed short rack links | Very short reach (a few meters) | Still popular for 400G and 800G clusters |
| AOC (active optical cable) | Longer high-speed rack links | Power and cost per link | Used when you need more reach than DAC |

Next, let’s break down each type and when it makes sense.

Copper Ethernet Cables for Everyday Reliability

Copper Ethernet cables use twisted pairs. That twist helps cancel out noise, so performance stays stable at typical in-building distances. CAT6a often shows up because it supports 10 Gb/s Ethernet (10GBASE-T) over a full 100-meter channel and handles PoE (Power over Ethernet) well. PoE matters when you power phones, cameras, Wi-Fi access points, and other edge gear.

In data centers, copper still plays a role, but usually for shorter runs. For example, copper might connect a server to a top-of-rack switch a few meters away, well inside the 100-meter channel limit for twisted-pair Ethernet. In office networks, copper is even more common because it’s cheap, flexible, and easy to terminate.
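The 100-meter figure is a channel budget rather than a single cable length. A minimal sketch, assuming the common structured-cabling split of a 90-meter permanent link plus up to 10 meters of patch and equipment cords, checks a planned copper run against it. The `CopperChannel` class and its fields are hypothetical names used for illustration.

```python
from dataclasses import dataclass, field

# Assumed budget, following common structured-cabling practice for
# twisted-pair Ethernet: 90 m permanent link + 10 m of cords = 100 m channel.
MAX_PERMANENT_LINK_M = 90.0
MAX_CORDS_TOTAL_M = 10.0
MAX_CHANNEL_M = 100.0


@dataclass
class CopperChannel:
    """Hypothetical model of one copper channel from switch port to device."""
    permanent_link_m: float  # fixed run between patch panel and outlet
    cord_lengths_m: list[float] = field(default_factory=list)  # patch/equipment cords

    def problems(self) -> list[str]:
        """Return the reasons this channel exceeds the assumed budget."""
        issues = []
        cords = sum(self.cord_lengths_m)
        total = self.permanent_link_m + cords
        if self.permanent_link_m > MAX_PERMANENT_LINK_M:
            issues.append(f"permanent link {self.permanent_link_m} m exceeds {MAX_PERMANENT_LINK_M} m")
        if cords > MAX_CORDS_TOTAL_M:
            issues.append(f"cords total {cords} m exceeds {MAX_CORDS_TOTAL_M} m")
        if total > MAX_CHANNEL_M:
            issues.append(f"channel {total} m exceeds {MAX_CHANNEL_M} m")
        return issues


ok = CopperChannel(permanent_link_m=85, cord_lengths_m=[3, 5])
too_long = CopperChannel(permanent_link_m=92, cord_lengths_m=[5, 6])
print(ok.problems())  # []
for issue in too_long.problems():
    print(issue)
```

The second channel fails all three checks, which is a hint to move the patch point closer or carry that run on fiber instead.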

However, copper has limits. Attenuation and crosstalk grow with length, and twisted-pair Ethernet tops out at a 100-meter channel. That is why teams avoid copper for long backbone runs. They also care about connector quality, bend radius, and cable management. One poorly handled cable can cause errors, retransmits, and lost time.

Still, copper earns its keep. It’s often the best fit for day-to-day networking where distance stays short and equipment is spread out in a building. It also makes audits simpler because labeling and patching are familiar.

Fiber Optic Cables for Speed and Distance

Fiber optic cables move data with light. That means they do not suffer from electrical interference in the same way copper does. As a result, fiber fits both high speed and longer reach.

You’ll usually see two big fiber styles:

- MMF (multimode fiber) for shorter distances, usually within a single building or data hall.

- SMF (single-mode fiber) for long runs, like inter-building links or metro connections.

In modern data centers, fiber also helps with density. A single fiber can carry 100 Gb/s or more, and teams bundle many fibers into trunks terminated with multi-fiber MPO/MTP-style connectors. Those connectors matter for parallel-optic links, which spread one high-speed link, such as 800G Ethernet, across several fiber pairs.

Also, fiber supports dense “many fibers, short distance” designs for AI racks. Instead of running one bulky cable for one link, teams run structured trunks and manage them with patch panels. That makes moves, adds, and changes faster.
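Sizing those trunks is mostly arithmetic. The sketch below assumes each link uses 16 fibers, as 8-lane parallel optics such as 800G SR8-style modules do, that a link is never split across two trunks, and a hypothetical 144-fiber trunk with 20% spare links reserved for growth. All three numbers are illustrative, so substitute your own optics and trunk sizes.

```python
def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division, avoiding floating-point rounding surprises."""
    return -(-a // b)


def trunks_needed(links: int, fibers_per_link: int, fibers_per_trunk: int,
                  spare_percent: int = 20) -> int:
    """Number of trunk cables needed for `links` parallel-optic links plus spares.

    Assumes all of a link's fibers stay inside one trunk.
    """
    links_per_trunk = fibers_per_trunk // fibers_per_link
    if links_per_trunk == 0:
        raise ValueError("one link needs more fibers than a trunk holds")
    spare_links = ceil_div(links * spare_percent, 100)
    return ceil_div(links + spare_links, links_per_trunk)


# 32 links at 16 fibers each, carried on hypothetical 144-fiber trunks.
print(trunks_needed(links=32, fibers_per_link=16, fibers_per_trunk=144))  # 5
```

Here 32 links need 512 fibers. Adding 7 spare links gives 39, and at 9 links per trunk that rounds up to 5 trunks.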

If you want a practical view of how 800G interconnects get selected (DAC, AOC, and optical options), see 800G Data Center Interconnect Guide: DAC, AEC, AOC & Optical. It’s a helpful engineer-focused breakdown of the common choices.

DAC and AOC for Ultra-High-Speed Connections

DAC and AOC are popular in AI and high-performance environments because they connect directly between ports with less fuss.

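DAC (direct-attach copper) is a twinax cable with transceiver-style connectors (such as QSFP) permanently attached at each end, so it plugs straight into switch or NIC ports without separate optics. Its reach is short, typically a few meters or less, and it shrinks as speeds rise. It’s often a cost-effective option for ultra-short links, like GPU-to-switch or GPU-to-GPU style links within a rack or between neighboring racks.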

AOC (active optical cable) is similar in role, but it uses fiber with the optics built into the connectors. It can reach much farther than DAC, often tens of meters, and the cable is thinner and lighter than copper, which eases routing in crowded racks. That extra reach helps when racks do not line up perfectly.

The key tradeoff is simple: DAC is usually cheaper and easier for very short runs, while AOC gives more reach when you need it. If you want a clear comparison for 2026 use cases, this guide is useful: DAC vs AOC Cables: Choosing High-Speed Interconnects for 2026 Data Centers and AI Clusters.
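Because the tradeoff comes down to reach, it can be written as a simple rule. This is a sketch under stated assumptions: the 3-meter DAC and 30-meter AOC limits are placeholders, not vendor figures (real reach depends on speed, cable gauge, and the specific product), and it assumes cost rises from DAC to AOC to separate transceivers with structured fiber.

```python
# Placeholder reach limits for illustration only. Real limits vary by speed,
# cable gauge, and vendor, so check the datasheets for your exact parts.
DAC_MAX_M = 3.0
AOC_MAX_M = 30.0


def pick_interconnect(length_m: float,
                      dac_max_m: float = DAC_MAX_M,
                      aoc_max_m: float = AOC_MAX_M) -> str:
    """Return the cheapest option that reaches, assuming DAC < AOC < optics in cost."""
    if length_m <= 0:
        raise ValueError("length must be positive")
    if length_m <= dac_max_m:
        return "DAC"
    if length_m <= aoc_max_m:
        return "AOC"
    return "transceivers + structured fiber"


for run_m in (1.5, 12, 80):
    print(f"{run_m} m -> {pick_interconnect(run_m)}")
```

Passing a tighter limit, such as `dac_max_m=2.0`, shows how quickly short runs tip into AOC territory, since passive copper reach generally shrinks as lane speeds rise.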

In practice, teams use DAC and AOC to support dense deployments with fewer moving parts. That plug-and-play feel helps during upgrades, because each link is a factory-terminated assembly you can swap without re-terminating fiber.

How Cables Connect Everything in Data Centers

A data center’s cable job is not only about speed. It’s about order. A messy network cabling plan can cost hours during troubleshooting and weeks during upgrades.

Most facilities rely on structured cabling. That means cables follow planned paths. They end in labeled patch points. Then technicians move traffic by patching, not by rerouting every time.

Also, cable layout affects thermal performance. When cables block airflow, switches and servers run hotter. That can increase power use and shorten component life.

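Teams often talk about “east-west” traffic too, meaning traffic between servers inside the data center rather than traffic entering or leaving it. In modern AI clusters, servers exchange data constantly. That traffic pattern makes structured fiber runs and dense interconnect design a priority.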

You’ll also see two common switch placement models that shape cable lengths and latency.

Structured Cabling and Patch Panels for Easy Management

Structured cabling is the organized backbone of the facility. Instead of mixing cables anywhere, it uses defined zones and pathways.

Patch panels play a big role. They act like “handoff hubs” for short patch cables. A technician can then change paths quickly by moving patch cords. That’s far easier than pulling new long runs every time you add a server.

In a well-run system, each patch point gets a clear label. That label connects to documentation, sometimes through DCIM tools or rack planning software. As a result, when something fails, you can trace it without guessing.
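Tracing works because each label points to the next hop. A minimal sketch, assuming a hypothetical documentation model where every record maps one labeled endpoint to the endpoint its cable lands on (the label format is invented for illustration and is not a specific labeling standard):

```python
# Hypothetical patch records: each labeled endpoint maps to where its cable lands.
PATCH_RECORDS = {
    "R12-SRV03-P1": "R12-PP1-07",   # server NIC -> rack patch panel port
    "R12-PP1-07": "ROWA-PP4-31",    # permanent trunk -> row patch panel
    "ROWA-PP4-31": "ROWA-SW2-31",   # patch cord -> switch port
}


def trace(start: str, records: dict[str, str]) -> list[str]:
    """Follow records from `start` until a dead end; fail loudly on loops."""
    path = [start]
    seen = {start}
    while path[-1] in records:
        nxt = records[path[-1]]
        if nxt in seen:
            raise ValueError(f"loop in records at {nxt}; documentation needs fixing")
        path.append(nxt)
        seen.add(nxt)
    return path


print(" -> ".join(trace("R12-SRV03-P1", PATCH_RECORDS)))
# R12-SRV03-P1 -> R12-PP1-07 -> ROWA-PP4-31 -> ROWA-SW2-31
```

A real tool would also store the reverse direction so you can trace from the switch end, but the idea is the same: if the chain breaks or loops, the records no longer match the racks.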

Structured cabling also supports growth. In many facilities, you plan for spare capacity. That way, when a new rack arrives, you connect it to the existing infrastructure without tearing everything apart.

Top-of-Rack vs End-of-Row Setups

Switch placement changes your cable patterns. It also changes how much cabling you do per rack.

In Top-of-Rack (ToR) designs, a switch is mounted at the top of each rack. Because the switch is close to servers, cable runs stay short. That helps with low latency and reduces bulk in cable trays.

In End-of-Row (EoR) designs, switches sit at the row end. This can reduce the number of switches, but it often means longer cabling runs from each rack to the switch.
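A rough estimate shows why EoR runs add up. The sketch assumes a single row of 0.6-meter-wide racks with the EoR switch rack at one end, a 2-meter cord per server in ToR, and 4 extra meters per EoR run for going up to the tray, back down, and slack. All of these figures are illustrative assumptions, and only server-to-switch cables are counted.

```python
RACK_WIDTH_M = 0.6           # assumed rack width
TOR_CORD_M = 2.0             # assumed server-to-ToR cord length
EOR_VERTICAL_EXTRA_M = 4.0   # assumed: up to the tray, back down, plus slack


def tor_cabling_m(racks: int, servers_per_rack: int) -> float:
    """Total server-to-switch cable when each rack has its own ToR switch."""
    return racks * servers_per_rack * TOR_CORD_M


def eor_cabling_m(racks: int, servers_per_rack: int) -> float:
    """Total server-to-switch cable when all servers run to one row-end switch rack."""
    total = 0.0
    for i in range(racks):
        # Rack i sits (i + 1) rack widths away from the switch rack at the row end.
        run_m = (i + 1) * RACK_WIDTH_M + EOR_VERTICAL_EXTRA_M
        total += servers_per_rack * run_m
    return total


print(f"ToR: {tor_cabling_m(10, 20):.0f} m, EoR: {eor_cabling_m(10, 20):.0f} m")
```

For 10 racks of 20 servers this gives about 400 m versus about 1,460 m. It deliberately leaves out the ToR switches’ uplinks to the next network layer, which add their own runs, so treat it as a comparison of access cabling only.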

For a clear side-by-side look at the two designs, check Comparing Data Center Network Designs: Top of Rack (ToR) vs. End of Row (EoR).

With AI clusters, short and predictable cable runs often matter as much as raw bandwidth.

In 2026, density pushes many teams toward ToR patterns in high-speed pods. Still, EoR stays useful where cost and manageability outweigh the smallest latency differences. Either way, cable planning decides how smooth upgrades feel.

Cables in Enterprise Networks and Beyond Data Centers

Data centers focus on server-to-server traffic. Enterprise networks also move data, but the mix looks different.

In offices and campus buildings, you often rely on copper for access layer needs. Devices connect to switches, then the switches connect to the wider network. In many cases, copper is enough because the runs stay inside a building.

Between buildings and across cities, fiber takes over. It handles longer distances and higher capacity with fewer signal problems.

So, you see a simple split:

- Copper fits short, local links.

- Fiber fits long, high-capacity links.

Local Area Networks with Simple Copper Runs

In an enterprise LAN, copper Ethernet links often connect computers, printers, phones, and Wi-Fi access points to a local switch. CAT6 or CAT6a cables are common choices.

The big reason is practicality. Copper is easy to install and terminate. It supports fast enough speeds for most office work. Plus, it works well with PoE, so one cable can handle both power and data.
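PoE also means each access switch has a power budget to plan against. A minimal sketch, assuming the switch reserves the full per-port maximum for each device’s PoE type (15.4 W for IEEE 802.3af, 30 W for 802.3at, and 60 W or 90 W for 802.3bt Type 3 or Type 4), with a hypothetical 370 W switch budget and device list:

```python
# Maximum power the switch (PSE) supplies per port for each IEEE PoE type.
PSE_MAX_W = {
    "802.3af": 15.4,        # Type 1 (PoE)
    "802.3at": 30.0,        # Type 2 (PoE+)
    "802.3bt-type3": 60.0,  # Type 3
    "802.3bt-type4": 90.0,  # Type 4
}


def poe_headroom_w(switch_budget_w: float, devices: list[tuple[str, str]]) -> float:
    """Watts left after reserving the worst case for each (name, poe_type) device.

    A negative result means the switch is over budget under this assumption.
    """
    reserved = sum(PSE_MAX_W[poe_type] for _, poe_type in devices)
    return switch_budget_w - reserved


# Hypothetical closet: 8 Wi-Fi access points on PoE+ and 10 phones on 802.3af.
devices = [("ap", "802.3at")] * 8 + [("phone", "802.3af")] * 10
print(round(poe_headroom_w(370, devices), 1))
```

This example comes out 24 W over budget. Many switches allocate power more tightly, for example by PoE class or LLDP negotiation, so real headroom is often better than this worst case.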

Good network cabling here looks like structured paths. Cables route through conduits, trays, or ceiling spaces. Then they land at patch panels in wiring closets.

When cabling is organized, troubleshooting becomes faster. You can identify the correct port. You can also test runs without tearing up walls.

That’s why many enterprise teams treat cabling like a maintenance task. They plan labeling updates and keep documentation current.
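Keeping documentation current is easier when you can diff it against reality. A minimal sketch, assuming you already have two hypothetical mappings of switch port to connected device: one from your records and one observed on the network (for example, gathered from LLDP neighbor tables by whatever tooling you use):

```python
def audit(documented: dict[str, str], observed: dict[str, str]) -> dict[str, list[str]]:
    """Compare documented port->device records with what is actually seen."""
    common = documented.keys() & observed.keys()
    return {
        # Port appears in both, but a different device is plugged in.
        "wrong_device": sorted(p for p in common if documented[p] != observed[p]),
        # Documented, but nothing was observed (unplugged, dead, or silent device).
        "not_seen": sorted(documented.keys() - observed.keys()),
        # Something is connected that the records do not mention.
        "undocumented": sorted(observed.keys() - documented.keys()),
    }


documented = {"Gi1/0/1": "AP-2F-EAST", "Gi1/0/2": "PRN-2F-01", "Gi1/0/3": "CAM-LOBBY"}
observed = {"Gi1/0/1": "AP-2F-EAST", "Gi1/0/2": "AP-2F-WEST", "Gi1/0/4": "PRN-2F-01"}
print(audit(documented, observed))
# {'wrong_device': ['Gi1/0/2'], 'not_seen': ['Gi1/0/3'], 'undocumented': ['Gi1/0/4']}
```

Here the printer seems to have moved from port 2 to port 4 and an access point took its place, exactly the kind of drift that makes troubleshooting slow.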

Wide Area Networks for Long-Distance Links

For WAN connections, fiber dominates. Branch offices might connect to a headquarters over a fiber-based transport. Often, those links carry internet access too.

In addition to distance, fiber helps because it can scale bandwidth without the same noise issues copper faces. That matters when traffic includes video calls, file syncing, cloud apps, and backups.

Some WAN links are built with direct fiber between sites. Others rely on leased lines or carrier services. Either way, the cabling and termination quality still matters. Wrong polarity, bad connectors, or poor splice work can create errors and downtime.
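Connector and splice quality show up directly in a link’s loss budget. A minimal sketch, assuming commonly cited TIA-568 worst-case component values (0.75 dB per mated connector pair, 0.3 dB per splice, 3.5 dB/km for multimode at 850 nm, and 0.5 dB/km for outside-plant single-mode at 1310 nm) and a hypothetical 4.0 dB allowance for the optics. Your own components and transceiver datasheets take precedence.

```python
# Commonly cited TIA-568 worst-case values (assumed; use your parts' specs).
FIBER_DB_PER_KM = {
    "mmf-850nm": 3.5,
    "smf-1310nm-outside-plant": 0.5,
}
CONNECTOR_PAIR_DB = 0.75
SPLICE_DB = 0.3


def link_loss_db(length_m: float, fiber: str, connector_pairs: int, splices: int) -> float:
    """Estimated worst-case insertion loss of a passive fiber link."""
    fiber_loss = length_m / 1000 * FIBER_DB_PER_KM[fiber]
    return fiber_loss + connector_pairs * CONNECTOR_PAIR_DB + splices * SPLICE_DB


# Hypothetical inter-building run: 2 km, patch panels at both ends, 4 splices.
loss = link_loss_db(2000, "smf-1310nm-outside-plant", connector_pairs=2, splices=4)
budget_db = 4.0  # hypothetical allowance for the chosen optics
print(f"loss {loss:.2f} dB, margin {budget_db - loss:.2f} dB")
```

One more badly mated connector pair (another 0.75 dB) would push this example past its allowance, which is why connector and splice quality matter so much.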

As network speeds rise, WAN backbones also lean toward dense fiber designs. That trend is part of why cable planning keeps growing in importance.

Even in enterprise upgrades, 800G and 1.6T speeds are starting to shape long-term planning. For an example of how cabling trends are shifting for those higher speeds, see 800G and 1.6T Data Center Cabling Trends in 2026.

2026 Trends and Smart Tips for Cable Success

In 2026, cables face three pressures: higher speeds, higher density, and tighter operational limits. AI racks push massive bandwidth in short spaces. Cloud services push constant change.

At the same time, data centers want less waste and less rework. That’s why better cable management matters. It also explains the push for designs that support airflow and easier swaps.

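You’ll also see more attention to cable monitoring. Some facilities track interface error counters and, on optical links, the receive power that transceivers report through digital optical monitoring (DOM). Both help spot failing cables or dirty connectors before they cause outages.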
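A minimal sketch of that kind of early warning, assuming you can poll each interface’s cumulative CRC error counter and the optical receive power its module reports. The -10.0 dBm floor and the zero-new-errors rule are hypothetical thresholds, since safe receive levels depend on the specific optic.

```python
from dataclasses import dataclass


@dataclass
class LinkSample:
    crc_errors: int       # cumulative CRC/FCS error counter for the interface
    rx_power_dbm: float   # receive power reported by the optical module


def flag_links(previous: dict[str, LinkSample], current: dict[str, LinkSample],
               min_rx_dbm: float = -10.0, max_new_errors: int = 0) -> dict[str, list[str]]:
    """Return interfaces whose errors grew or whose receive power looks too low."""
    flagged = {}
    for name, now in current.items():
        reasons = []
        before = previous.get(name)
        if before is not None:
            new_errors = now.crc_errors - before.crc_errors  # negative if the counter was reset
            if new_errors > max_new_errors:
                reasons.append(f"+{new_errors} CRC errors")
        if now.rx_power_dbm < min_rx_dbm:
            reasons.append(f"rx {now.rx_power_dbm} dBm < {min_rx_dbm} dBm")
        if reasons:
            flagged[name] = reasons
    return flagged


previous = {"Eth1/1": LinkSample(0, -2.1), "Eth1/2": LinkSample(5, -3.0), "Eth1/3": LinkSample(0, -11.5)}
current = {"Eth1/1": LinkSample(0, -2.2), "Eth1/2": LinkSample(42, -3.1), "Eth1/3": LinkSample(0, -11.8)}
for name, reasons in flag_links(previous, current).items():
    print(name, reasons)
```

Comparing two samples, rather than reading absolute counters, keeps old errors from a past incident from raising fresh alarms.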

Here’s a quick reality check: a cable is never “just a cable.” It affects power, cooling, troubleshooting speed, and upgrade time.

Riding the Wave of High-Speed and Green Innovations

Higher-speed Ethernet (including 800G and rising interest in 1.6T planning) changes how teams build. It often means more fiber per tray, more density in patching, and more careful connector handling.

Green goals also matter. Better airflow around cabling can reduce cooling energy. Efficient designs can also reduce the number of re-terminations during upgrades. Less rework means less waste.

Meanwhile, liquid cooling and high-power racks push teams to watch cable routes closely. Cables can’t just hang where they fit. They must stay compatible with airflow patterns and safety needs.

So, the trend is not only “more speed.” It’s also better fit between cabling, cooling, and operations.

Best Practices to Avoid Cable Nightmares

Cable nightmares usually start small. A missing label. A cable tray packed too tight. A bundle that blocks airflow. Then a later change makes it worse.

To reduce risk, follow a few habits that experienced teams repeat.

| Challenge | What causes it | What to do instead |
|---|---|---|
| Tangles and slow swaps | Cables have no clear path or slack plan | Plan routes, add slack, and label every patch point |
| Overheating spots | Bundles block airflow to ports and fans | Use proper spacing and routing supports, keep trays organized |
| Hard troubleshooting | Documentation and labels don’t match the real layout | Update records after every move, add color coding where needed |
| Upgrade delays | Wrong lengths or mixed connectors | Measure early, buy compatible parts, and stage spares |
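The “measure early” and “add slack” advice can be turned into a quick bill of materials. A minimal sketch, assuming a hypothetical catalog of stock patch-cord lengths and a 10% slack allowance on every measured run:

```python
from collections import Counter

STOCK_LENGTHS_M = [0.5, 1, 1.5, 2, 3, 5, 7, 10, 15, 20]  # hypothetical catalog


def pick_length(measured_m: float, slack_fraction: float = 0.1,
                stock: list[float] = STOCK_LENGTHS_M) -> float:
    """Shortest stock cable that covers the measured run plus slack."""
    needed = measured_m * (1 + slack_fraction)
    for length in sorted(stock):
        if length >= needed:
            return length
    raise ValueError(f"no stock cable covers {needed:.2f} m; plan a structured run instead")


measured_runs_m = [0.8, 1.2, 1.9, 2.6, 4.4]
order = Counter(pick_length(run) for run in measured_runs_m)
print(sorted(order.items()))  # [(1, 1), (1.5, 1), (3, 2), (5, 1)]
```

Rounding every run up to a stock size keeps slack consistent, and any run no catalog length covers is a sign it belongs in structured cabling rather than a patch cord.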

If you want more practical guidance on structured approaches, this post covers common steps for avoiding issues: Optimizing Your Infrastructure: Data Center Cabling Best Practices.

Here’s the mindset shift that helps most teams. Treat cabling as a system. When that system stays clean, upgrades get faster and downtime drops.

Conclusion

Cables keep networks working, from short office LAN links to high-density data center pods. Copper handles many local connections well. Fiber takes over for long reach and dense bandwidth. DAC and AOC fill the gap for fast, short high-speed interconnects in 2026.

Just as importantly, cables succeed when the setup is organized. Structured cabling, smart patch panels, and thoughtful ToR or EoR choices reduce downtime and speed up upgrades.

So if you have an upcoming refresh, start with a cable audit. Then fix what’s messy, mislabeled, or hard to trace. Ready to see what you might improve first in your own setup?